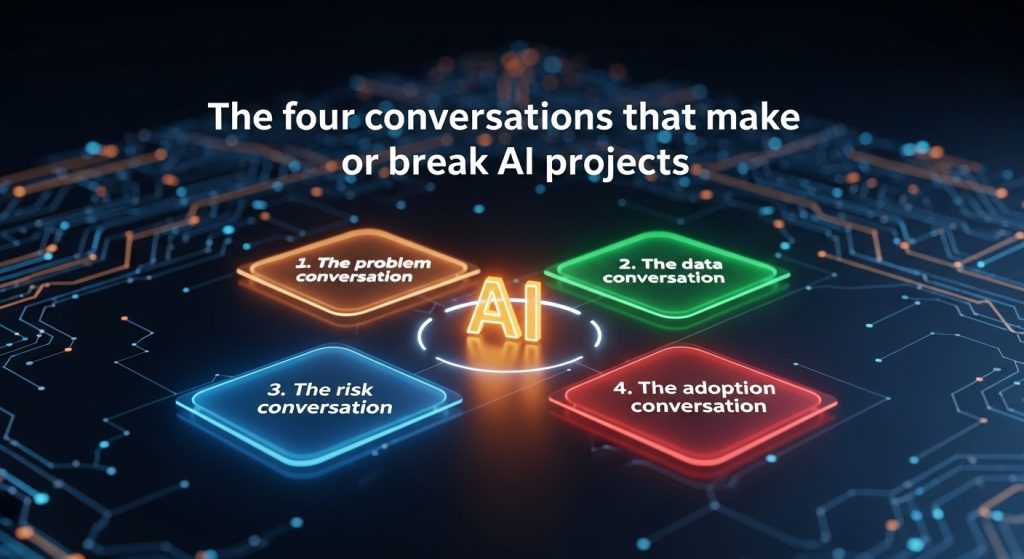

Most AI initiatives struggle not because of the model, but because the right people never had the right conversation at the right time. Tools change; people do not. Good governance starts with clear talk, documented decisions, and steady follow-up. The four conversations below are simple by design. Each one fits into a regular meeting, your current documents, and the tools you already own. No new platforms, no jargon, just clarity.

The first conversation defines the problem. This is the moment a team trades vague ambition for a testable goal. Keep it short, specific, and measurable, then write it where everyone can see it.

Use this script in a meeting:

“State the business problem in one sentence. State the user who owns the pain. State the number we plan to improve. Read it back, and ask if anyone disagrees with the wording.”

Write it down in plain text:

Problem, month-end invoice matching takes too long, finance managers spend 12 hours per cycle, target is 6 hours without a rise in error rate.

That one line is a promise. It guides scope, protects the team from shiny distractions, and gives leadership a number to inspect later. If you cannot write a sentence like this, you are not ready to choose a model.
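One way to keep that number inspectable is to store it as data alongside the sentence. The sketch below is illustrative only: the names `ProblemStatement` and `target_met` are hypothetical, and the baseline error rate of 2% is an assumed value, since the example above does not state one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProblemStatement:
    """The numbers behind a one-line problem statement."""
    process: str
    owner: str
    baseline_hours: float       # hours per cycle today
    target_hours: float         # hours per cycle the team is aiming for
    baseline_error_rate: float  # assumed value, not given in the article


def target_met(stmt: ProblemStatement, measured_hours: float,
               measured_error_rate: float) -> bool:
    """True when cycle time hits the target and the error rate has not risen."""
    return (measured_hours <= stmt.target_hours
            and measured_error_rate <= stmt.baseline_error_rate)


stmt = ProblemStatement(
    process="month-end invoice matching",
    owner="finance managers",
    baseline_hours=12,
    target_hours=6,
    baseline_error_rate=0.02,  # hypothetical baseline for illustration
)

print(target_met(stmt, measured_hours=6, measured_error_rate=0.02))  # True
print(target_met(stmt, measured_hours=5, measured_error_rate=0.03))  # False
```

The second call fails even though the time target is beaten, because the error rate rose. That mirrors the "without a rise in error rate" clause in the sentence.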

The best time to talk about data is before anyone opens a notebook. The goal is not a perfect catalogue. The goal is a shared view of what exists, who owns it, and what is missing.

Ask three questions, then capture answers in a shared document:

Which sources hold the fields we need? Who owns access? What quality risks are known?

If you hear uncertainty, pause the project for a short discovery sprint rather than push ahead and pay for surprises later.

A simple way to record decisions:

Source, AP system export, owner, Finance Ops, refresh, daily at 18:00, format, CSV, known issue, vendor names have inconsistent casing.

This is enough to start. It is also enough to ask for help if access stalls or a field does not exist.
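If the team prefers to keep inventory rows in a script or notebook rather than free text, a record like the one above might look as follows. This is a minimal sketch: `InventoryRow` and `normalize_vendor` are hypothetical names, and the normalization shown is just one possible response to the casing issue.

```python
from dataclasses import dataclass


@dataclass
class InventoryRow:
    source: str
    owner: str
    refresh: str
    file_format: str
    known_issue: str

    def as_line(self) -> str:
        """Render the row in the comma-separated style used in this article."""
        return (f"Source, {self.source}, owner, {self.owner}, "
                f"refresh, {self.refresh}, format, {self.file_format}, "
                f"known issue, {self.known_issue}")


def normalize_vendor(name: str) -> str:
    """Collapse extra whitespace and ignore case so name variants compare equal."""
    return " ".join(name.split()).casefold()


row = InventoryRow(
    source="AP system export",
    owner="Finance Ops",
    refresh="daily at 18:00",
    file_format="CSV",
    known_issue="vendor names have inconsistent casing",
)

print(row.as_line())
print(normalize_vendor("ACME  Corp") == normalize_vendor("acme corp"))  # True
```

Keeping the known issue next to a small helper that addresses it makes the quality risk visible to whoever picks up the data next.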

Risk is not a form to file at the end. Risk is a regular talk about what could go wrong, how likely it is, and how you will contain it. Bring legal, privacy, security, and a business owner into the room early. Keep the talk practical.

Use this agenda:

List three harms that matter to users or customers, such as accuracy errors, privacy leakage, and process failure. For each one, write a small control that fits your current process, a threshold for action, and a person to notify.

A small control can be enough:

For accuracy, sample ten predictions a day. If two or more fail the business rule, roll back to the last safe version and alert the owner.

For privacy, mask direct identifiers in the training copy and store the masking rules beside the data inventory.

For process, write a rollback note that any on-call engineer can follow at 2 a.m.
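The accuracy control can be sketched as a small daily check. The code below is an illustration under assumptions: the function names, the record fields, and the business rule `amounts_match` are hypothetical, and the function only returns a decision. The rollback itself and the alert to the owner depend on your own deployment tooling.

```python
import random
from typing import Callable, Iterable, Sequence

SAMPLE_SIZE = 10        # predictions sampled per day
FAILURE_THRESHOLD = 2   # two or more failures trigger action


def draw_sample(predictions: Sequence[dict],
                size: int = SAMPLE_SIZE) -> list[dict]:
    """Pick up to `size` predictions at random from the day's output."""
    return random.sample(predictions, k=min(size, len(predictions)))


def daily_accuracy_check(sample: Iterable[dict],
                         passes_rule: Callable[[dict], bool]) -> str:
    """Return 'rollback' when failures reach the threshold, else 'ok'."""
    failures = sum(1 for prediction in sample if not passes_rule(prediction))
    return "rollback" if failures >= FAILURE_THRESHOLD else "ok"


# Hypothetical business rule: the matched amount must equal the invoice amount.
def amounts_match(prediction: dict) -> bool:
    return prediction["matched_amount"] == prediction["invoice_amount"]


todays_predictions = (
    [{"invoice_amount": 100, "matched_amount": 100}] * 8
    + [{"invoice_amount": 100, "matched_amount": 90}] * 2
)

sample = draw_sample(todays_predictions)
print(daily_accuracy_check(sample, amounts_match))  # rollback
```

With exactly ten predictions in the day, the sample contains all of them, so the two mismatches reach the threshold and the check returns `rollback`.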

You do not need a large framework to reduce risk. You need a short routine that runs every week.

Adoption is the part most teams skip. The pilot looks good, then nothing changes in daily work. The cure is to plan the human part like a product: clear ownership, simple training, and visible wins.

Start with a change story in four lines:

Who changes, what they stop doing, what they start doing, how the change is measured in their world.

Example: AP analysts stop hand-matching vendor names and start reviewing flagged exceptions. Success is measured by cycle time and dispute rate on their dashboard.

Plan for a short launch arc:

Week 1, show and tell, five-minute demo in a standing meeting.

Week 2, guided use, one manager sits with one user to watch the first run.

Week 3, office hours, a drop-in call for questions.

Week 4, publish the first small win, cycle time cut by two hours, error rate steady.

You do not need a gala launch. You need a few steady moments that prove value in the user’s day.

Pick one live initiative. Book four short meetings, one per conversation, thirty minutes each. Use the scripts above. Capture agreements in a shared doc. End every meeting with a single next step, a name, and a date. That is governance. It is also how you build momentum without a parade of new committees.

If you want to add a small layer of automation, create one page in your team’s wiki called "AI project decisions", and add four subheadings that match the conversations. When anyone asks where things stand, send that link.

Problem one-liner, fill in the blanks:

Problem, [process] takes [x time or money], owner, [role], target, [new number] by [date], no rise in [quality metric].

Data inventory row, fill in the blanks:

Source, [system or file], owner, [team], refresh, [cadence], format, [type], known issue, [short note].

Risk control note, fill in the blanks:

Risk, [type], control, [check or guard], threshold, [number], action, [rollback or fix], notify, [name or role].

Adoption story, fill in the blanks:

Who, [role], stop, [old task], start, [new task], measured by, [metric in the user’s world].

Keep these blocks in one document. Ask teams to update them before the weekly status call. You now have a living source of truth that travels with the project.
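A quick way to see whether the blocks are up to date before the status call is to look for blanks that are still unfilled. The sketch below assumes blanks are written in square brackets, as in the templates above. The function name `unfilled_blanks` and the sample text are hypothetical.

```python
import re

# A blank is any bracketed placeholder such as [process] or [new number].
BLANK = re.compile(r"\[[^\[\]]+\]")


def unfilled_blanks(text: str) -> list[str]:
    """Return every bracketed placeholder still present in the text."""
    return BLANK.findall(text)


doc = (
    "Problem, invoice matching takes 12 hours, owner, finance manager, "
    "target, 6 hours by [date], no rise in error rate.\n"
    "Who, AP analysts, stop, [old task], start, reviewing flagged exceptions, "
    "measured by, cycle time."
)

print(unfilled_blanks(doc))  # ['[date]', '[old task]']
```

One caveat: if the document uses square brackets for anything else, such as markdown links, those will be reported too, so the pattern may need tightening for your wiki.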

AI efforts rise or fall on conversations, not dashboards. When people agree on the problem, the data that matters, the risks that count, and what adoption looks like in a real week of work, the model has a real job to do. That is the heart of AI project governance, and it is available to every team that chooses to talk clearly and write things down.

If you want a printable worksheet that mirrors these four conversations, visit the Resources page on dx.training, or email info@dx.training.

© 2025 Digital Transformation Training. A brand of Artintech Inc. All rights reserved.